EU AI Act Compliance Is Already Breaking Startups: What PMs Need to Know

The EU AI Act is not a future concern. It is actively reshaping products today. But treating compliance as a burden misses the point. The best Product Managers will treat it the same way they treat any product constraint: as a forcing function for better decisions.

I am bullish on AI. I have been for a while, and nothing I have seen in the last twelve months has changed that view. The pace of capability improvement is staggering, the application space is enormous, and the economic incentives are pulling in one direction: more AI, faster, everywhere.

But I have also spent enough years in product development to know that “move fast” without “think carefully” is how you end up with a mess that takes twice as long to clean up as it took to create. And right now, the EU AI Act is forcing a conversation that the industry has been avoiding.

It is not a future concern. It is already here.

The Reality on the Ground

The enforcement milestones are arriving fast, and several have already passed:

Aug 2024 ─── Act entered into force

│

Feb 2025 ─── Prohibitions on unacceptable AI practices took effect

│

Aug 2025 ─── General Purpose AI model requirements took effect

│

Aug 2026 ─── ⚠ HIGH-RISK AI SYSTEM REQUIREMENTS TAKE FULL EFFECT

│

Aug 2027 ─── Full enforcement begins

That critical August 2026 deadline is four months away.

The penalties are not theoretical. Violations of prohibited practices carry fines of up to EUR 35 million or 7% of global annual turnover, whichever is higher. High-risk violations reach EUR 15 million or 3%. Even supplying incorrect information to regulators can cost up to EUR 7.5 million or 1%.

For a seed-stage startup, even the “proportionate caps” designed to protect SMEs can be existential. As one compliance source put it bluntly: a EUR 140,000 fine for a seed-stage company is a death sentence.
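To make the arithmetic concrete, here is a minimal Python sketch of how those ceilings combine. The tier names, the `max_fine_eur` function, and the example turnover figures are hypothetical. The 1% share for incorrect information and the lower-of rule for SMEs reflect my reading of the Act's penalty provisions, so treat both as assumptions. The sketch computes ceilings only; actual fines are set case by case.

```python
# Fine ceilings per tier: (fixed amount in EUR, percent of global annual turnover).
# Tier names are hypothetical labels. The 1% figure is an assumption based on
# my reading of the Act's penalty article.
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_violation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, global_turnover_eur: float, is_sme: bool = False) -> float:
    """Return the maximum possible fine for a tier, not a predicted fine.

    Standard rule: the HIGHER of the fixed amount and the turnover share.
    Assumed SME rule: the LOWER of the two (the "proportionate cap").
    """
    fixed_cap, turnover_pct = FINE_TIERS[tier]
    turnover_cap = global_turnover_eur * turnover_pct / 100
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # Hypothetical large company with EUR 1 billion turnover.
    print(max_fine_eur("prohibited_practice", 1_000_000_000))
    # Hypothetical seed-stage startup with EUR 2 million turnover.
    print(max_fine_eur("prohibited_practice", 2_000_000, is_sme=True))
```

With a hypothetical EUR 2 million turnover, the SME ceiling for a prohibited-practice violation works out to EUR 140,000: small by big-tech standards, and still potentially fatal at seed stage.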

Founders on Reddit are already discussing this. One described having to “rewrite a big part of my AI-powered chatbot to meet the new regulations.” Another flagged the health AI classification problem: “the line between ‘health advice’ and ‘medical device’ is blurry and the EU is not messing around.” A third described the moment their first EU customer asked about AI Act compliance and they had nothing prepared.

This is not hypothetical risk. It is operational reality.

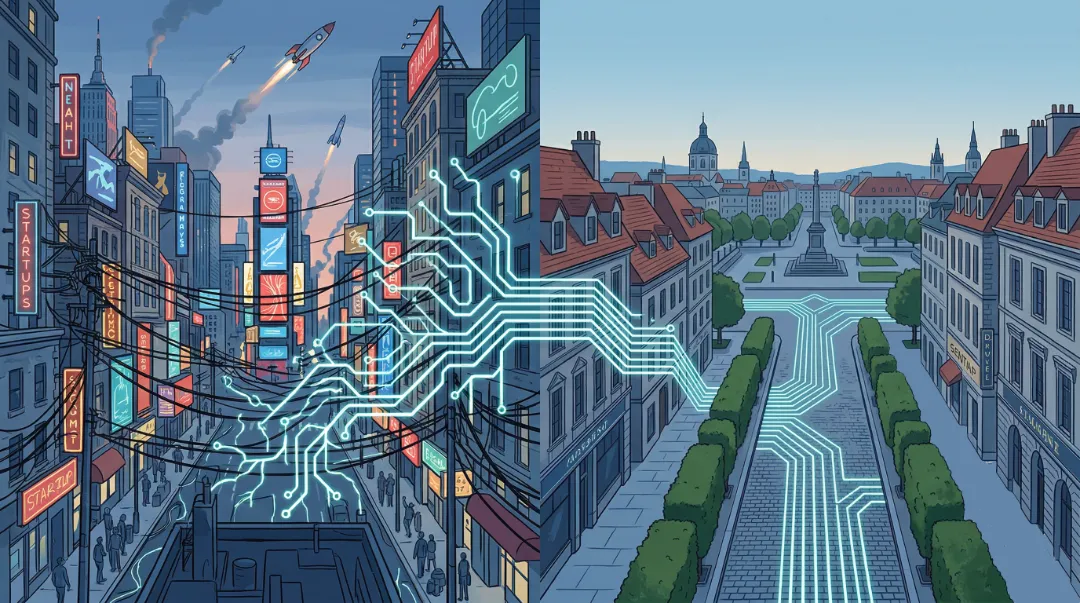

The Two Extremes

There are broadly two perspectives in this conversation, and both have a point.

The US “Wild West” argument holds that America’s relatively light-touch approach to technology regulation has been a primary engine of its dominance. Apple, Google, Amazon, Microsoft, Meta: the most valuable and influential technology companies on the planet were all built in an environment where founders could experiment, ship, iterate, and scale without navigating a compliance labyrinth first. For fifty years, US tech companies have led the world in innovation, and that track record is difficult to argue with. Whether the US still leads in every frontier, particularly AI where the debate about China’s position is genuinely open, is a fair question. But the broader pattern is clear: permissive regulatory environments have historically correlated with explosive technological growth.

The EU “precautionary” argument holds that unchecked AI development creates real harm: discriminatory hiring algorithms, opaque credit scoring, surveillance creep, and safety risks in critical infrastructure. The AI Act is an attempt to draw lines before the damage is done, not after. Proponents argue that without guardrails, we are sleepwalking into a world where AI systems make consequential decisions about people’s lives with no transparency, no accountability, and no recourse.

Both positions contain truth. And both, taken to their logical extreme, lead to bad outcomes.

The unregulated approach creates enormous value quickly, but it also creates enormous risk. When an AI system wrongly denies someone a mortgage, or a medical chatbot gives dangerous advice, or a hiring tool systematically discriminates, the harm is real and often falls on people who have no visibility into the system that affected them. “Move fast and break things” might be acceptable when you are iterating on a landing page. It is a dangerous philosophy when the “things” being broken are people’s livelihoods, health, or civil rights.

The heavily regulated approach protects citizens, but it can also strangle the innovation it claims to want. European startups have been vocal about this. Some welcomed the clarification the Act provides, but others argued the original timeline “favoured deep-pocketed American tech giants who could afford to hire armies of compliance lawyers.” When compliance costs create a structural advantage for incumbents, regulation stops being a shield and starts being a moat, just one that protects the wrong people.

A Product Manager’s Perspective

Here is where I think the conversation goes wrong. Both sides frame regulation as something that happens to product development, an external force that either helps or hinders. But if you have spent any time building products in complex environments, you know that constraints are not inherently good or bad. What matters is how you design around them.

This is exactly the same principle I wrote about in the context of agile governance. Governance is not the enemy of delivery. Bad governance is the enemy of delivery. Good governance supports the flow of value by creating guardrails that keep teams on track without dictating every step.

The EU AI Act, at its core, is asking for things that good product teams should want anyway:

- Transparency: Tell users they are interacting with AI. Explain how decisions are made. This is not a burden; it is basic product integrity.

- Explainability: If your AI system makes a consequential decision, you should be able to explain why. If you cannot, that is not a regulation problem. That is a product quality problem.

- Human oversight: For high-risk decisions, keep a human in the loop. Again, this is not radical. It is the kind of thing experienced PMs already advocate for.

- Logging and monitoring: Maintain records of how your system behaves. This is just good engineering practice dressed up in legal language.

- Risk assessment: Before you build, think about what could go wrong and for whom. This is discovery. This is what we are supposed to do.

The issue is not that these requirements exist. The issue is that they are being imposed on products that were never designed with them in mind. Retrofitting explainability into a system that was built as a black box is expensive and painful. Building it in from day one is a design decision, not a compliance project.
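What "building it in from day one" might look like is easier to show than to describe. The sketch below is one possible shape for a per-decision record that covers explainability, human oversight, and logging in a single structure. Every name in it (`DecisionRecord`, `is_releasable`, `to_log_line`, the field names) is hypothetical, and the Act does not prescribe this schema. It is an illustration of the design decision, not a compliance template.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    """One consequential AI decision, captured at the moment it is made."""
    system_id: str
    model_version: str
    input_summary: dict          # the inputs that mattered, not raw personal data
    outcome: str
    explanation: str             # plain-language reason shown to the affected user
    high_risk: bool
    reviewed_by: Optional[str] = None  # human reviewer, if one has signed off
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def is_releasable(self) -> bool:
        """Every decision needs an explanation; high-risk ones also need a human."""
        if not self.explanation.strip():
            return False
        return (not self.high_risk) or self.reviewed_by is not None


def to_log_line(record: DecisionRecord) -> str:
    """Serialise the record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = DecisionRecord(
        system_id="credit-scoring",
        model_version="v1",
        input_summary={"income_band": "B"},
        outcome="declined",
        explanation="Debt-to-income ratio above the lending threshold.",
        high_risk=True,
    )
    print(record.is_releasable())   # blocked until a human reviews it
    record.reviewed_by = "analyst-17"
    print(to_log_line(record))
```

The point of the design is that the explanation and the reviewer are fields on the decision itself. A team that has to populate them at write time cannot ship a black box by accident.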

The Forcing Function

The tension between regulation and innovation has been a recurring theme on Lenny’s Podcast recently, and several conversations have landed on a point that I think is underappreciated: constraints do not just limit what you can build. They change how you think about what you should build.

Brian Balfour described companies that define “incredibly hard constraints” as a strategy, not a problem. One company he worked with benchmarked against competitors and set a constraint that each function would be one-fifth the size. That constraint didn’t slow them down. It forced them to find fundamentally different ways of working, including aggressive adoption of AI tooling.

Regulatory constraints can work the same way, if you let them.

Amol Avasare from Anthropic put it even more directly: “As the risks get higher and the stakes get higher, I think the fact that we are taking a stance and safety is critical to what we do, is actually going to become a significant competitive advantage.”

This is not compliance-as-cost. This is compliance-as-differentiation. In a market where trust is increasingly scarce, the company that can say “we built this responsibly, and here is the evidence” has an advantage over the one scrambling to bolt on compliance features before a deadline.

Eric Ries made a related point about AI alignment: “Everyone’s talking about AI alignment. I’d be a little more sanguine about AI alignment if the companies doing the aligning were better at aligning their human intelligences.” The Act’s explainability and transparency requirements essentially force companies to make their organisational values explicit. Ries argues this is work the tech industry has “severely underinvested” in. He is right.

Lightweight Governance, Not Bureaucratic Theatre

My position is not that the EU AI Act is perfect. It is not. The classification system is ambiguous in places. The “wellness tool vs medical device” boundary is genuinely unclear. The phased timeline has created perverse incentives, with some companies rushing to launch high-risk products before enforcement kicks in. And the compliance burden falls disproportionately on the startups that can least afford it.

But the answer to imperfect regulation is not no regulation. It is better regulation. And the principles we apply in product development point the way.

In product work, we know that heavyweight governance kills velocity. Stage gates, committee approvals, and thick compliance documents slow teams to a crawl. But we also know that zero governance is chaos. Without any constraints, teams build the wrong things, accumulate unmanageable risk, and lose alignment with the broader organisation.

The sweet spot is lightweight governance: clear guardrails, empowered teams, and fast feedback loops. You define the boundaries, then give teams freedom within them. You inspect and adapt. You treat the governance model itself as a product that evolves.

AI regulation should work the same way:

- Classification should be clear and predictable. Founders should not be guessing whether their wellness app counts as a medical device. The boundaries need to be sharp enough that a product team can make a confident call during discovery, not after months of legal review.

- Compliance should be proportionate. The requirements for a chatbot recommending restaurants should not be the same as for a system making parole decisions. Risk-based tiering is the right idea, but the tiers need to be practical, not just theoretical.

- The cost should not be a moat. If compliance is so expensive that only large incumbents can afford it, the regulation is failing. Tooling, templates, and shared infrastructure for common compliance patterns would help enormously.

- The focus should be on outcomes, not paperwork. Does the system behave safely? Can affected users understand and challenge decisions? Is there meaningful human oversight where it matters? These are the questions that matter, not whether a specific template was filled in.

What This Means for PMs

If you are a Product Manager working on anything that touches AI, this is your problem now. Not legal’s problem. Not compliance’s problem. Yours.

The best PMs I know have always understood that constraints are not obstacles; they are design inputs. A screen size constraint forces better information hierarchy. A performance budget forces cleaner architecture. A regulatory requirement forces you to think about who your product affects and how.

Here is what I would do right now:

Assess your risk classification early. During discovery, not after launch. If your product touches hiring, credit, education, healthcare, law enforcement, or critical infrastructure, assume you are high-risk until proven otherwise. Build that assumption into your PRDs from the start.

Design for explainability from day one. If you cannot explain why your system made a decision, you have a product quality problem regardless of what the EU thinks. Explainability is not just a compliance feature. It is a trust feature, and trust drives retention.

Own compliance as a product concern. As Ian McAllister said on Lenny’s Podcast: “The more you grow, you have to increasingly find the constraints or barriers to your success and knock them down no matter what they are.” Do not wait for legal to hand you a checklist. Understand the requirements yourself and factor them into your roadmap.

Watch the US convergence. Colorado’s SB 24-205 introduces risk management policies, algorithmic impact assessments, and consumer notice mechanisms. This is not an EU-only trend. The direction of travel is clear globally, and PMs who treat EU compliance as an isolated European problem will find themselves retrofitting again when similar requirements land closer to home.
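The first of these recommendations, assuming high-risk until proven otherwise, is simple enough to encode as a discovery-stage check. The sketch below is a hypothetical triage helper. The domain list is the shortlist from this article rather than the Act's legal annex, and `provisional_risk_tier` and its return labels are names I made up. It prompts a conversation with legal; it does not replace one.

```python
from typing import Iterable

# Domains named in the recommendation above. This is the article's shortlist,
# not the Act's legal definition of high-risk, so use it as a discovery prompt.
HIGH_RISK_DOMAINS = frozenset({
    "hiring",
    "credit",
    "education",
    "healthcare",
    "law_enforcement",
    "critical_infrastructure",
})


def provisional_risk_tier(
    domains_touched: Iterable[str],
    cleared_by_review: bool = False,
) -> str:
    """Default to high-risk whenever a sensitive domain is touched.

    `cleared_by_review` should only be True when a documented legal review
    has concluded the product is not high-risk (the "proven otherwise" case).
    """
    flagged = HIGH_RISK_DOMAINS.intersection(domains_touched)
    if not flagged:
        return "not_flagged"
    if cleared_by_review:
        return "cleared_with_evidence"
    return "assume_high_risk"


if __name__ == "__main__":
    # Hypothetical PRD for a CV-screening feature.
    print(provisional_risk_tier(["hiring", "search"]))
```

A field like this in the PRD template makes the default visible: the burden of proof sits with the claim that a product is not high-risk, not the other way round.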

The Bottom Line

I remain deeply optimistic about AI. The technology is transformative, and the potential to improve lives at scale is real. But potential and impact are not the same thing. The gap between them is filled with product decisions, and those decisions need guardrails.

The EU AI Act is imperfect, but its core instinct is right: consequential AI systems should be transparent, explainable, and accountable. These are not anti-innovation principles. They are pro-trust principles. And in the long run, trust is the foundation that sustainable innovation is built on.

The PMs who treat compliance as a checkbox will resent it. The PMs who treat it as a product constraint, one that forces clearer thinking, better architecture, and stronger user trust, will build better products because of it.

We should not be choosing between the American model of unchecked speed and the European model of cautious restraint. We should be applying the same principles we use in product development: encourage experimentation, accept that risk is inherent, but apply lightweight governance to guide the flow of value without blocking it.

That is not a regulatory philosophy. That is just good product management.